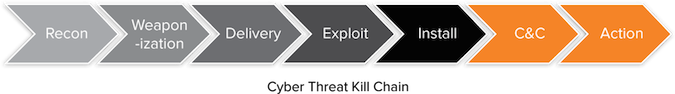

In 2011, Lockheed Martin analysts introduced an intelligence-driven model of the seven steps bad actors generally follow, and called it the “cyber kill chain”. For those who could use a recap, the seven stages are:

● Reconnaissance: Identifying targets, gathering data (including research for spear phishing and other social engineering attacks), and evaluating the target's network structure.

● Weaponization: Identifying vulnerabilities, creating or finding exploits, and developing a means of infecting targets.

● Delivery: Transferring exploits onto target devices.

● Exploitation: Executing the exploit code (often in multiple phases) while attempting to go undetected by breach defense systems.

● Installation: Creating a backdoor in order to persist on the network.

● Command and Control: Enabling communication between the installed malware and the attacker's infrastructure so it can receive instructions.

● Actions on Objectives: Carrying out the primary purpose of the attack, which can include stealing money and intellectual property, destabilizing a competitor, and so on.

While the kill chain is interesting to analyze, especially when reflecting upon how much has changed over the years, its purpose was never merely to illustrate what today’s bad actors are doing. Rather, it was designed as a framework to help enterprises improve their network defenses, so they could proactively remediate threats and better prepare for future attacks. At least, that was the vision. The reality has fallen short.

Here we are a little over three years later, and although the phrase “cyber kill chain” is embedded in the cyber security vocabulary, many enterprises are still not proactive about keeping their assets, data, and reputations safe from bad actors. Instead, their approach remains reactive.

Why is this happening? It is not because many enterprises take cyber security lightly (at least not anymore, with costly infections making headlines on what seems like a daily basis). Rather, it is because they want to mitigate the risk of data loss, a risk that increases as the cyber kill chain plays out into its later stages. As a result, they hit the stop button as soon as they think they have detected an exploit. And while this reactive stance appears to halt threats in their tracks and keep enterprises safe, the reality is quite different, and much more dangerous.

In trying to prevent known threats from advancing to the later stages of the kill chain, enterprises are cutting themselves off from critical intelligence that would help them identify unknown threats: mainly those that have slipped past their traditional breach defense systems and are either actively stealing data or lying low, awaiting further instructions from a command and control server. Paradoxically, in an effort to stay safe, enterprises are putting their data, assets, IP, and reputations in harm’s way, and tacitly informing bad actors throughout the cyber underground that their network is “open for business.”

The good news is that enterprises can resolve this paradox without re-inventing their network security architecture, by looking at the end of the kill chain. One technology-led way to achieve this is to regularly analyze outbound traffic to identify anomalies and pinpoint compromised devices before the compromise becomes a breach, not after. Once equipped with this threat data, enterprises can automatically feed it back into the defenses that operate at earlier stages of the kill chain, both to remediate the threat and to help ensure that a similar attack does not succeed again.
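To make "analyzing outbound traffic" a little more concrete, here is a minimal sketch of one common heuristic, offered as an illustration rather than the specific technology the author has in mind: beaconing detection. Malware that is "lying low" typically checks in with its command and control server at near-regular intervals, so an internal device that contacts the same external destination with very little timing jitter is worth a closer look. The `Flow` record schema, the `find_beacons` function, and both thresholds are hypothetical and untuned; a real deployment would work from firewall, proxy, or NetFlow/IPFIX logs and would need allow-lists for legitimate periodic traffic such as update checks.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class Flow:
    """One outbound connection record (hypothetical, simplified schema)."""
    src: str          # internal device (IP address or hostname)
    dst: str          # external destination
    timestamp: float  # seconds since epoch


def find_beacons(flows, min_connections=6, max_jitter=0.1):
    """Flag (src, dst) pairs whose connections recur at near-constant intervals.

    Jitter is the coefficient of variation of the gaps between connections
    (standard deviation divided by mean). Thresholds are illustrative only.
    """
    times = defaultdict(list)
    for f in flows:
        times[(f.src, f.dst)].append(f.timestamp)

    suspects = []
    for (src, dst), ts in times.items():
        # Need at least 3 connections (2 intervals) for a meaningful spread.
        if len(ts) < max(min_connections, 3):
            continue
        ts.sort()
        intervals = [b - a for a, b in zip(ts, ts[1:])]
        avg = mean(intervals)
        if avg <= 0:
            continue  # all connections at the same instant; no timing signal
        jitter = pstdev(intervals) / avg
        if jitter <= max_jitter:
            # (device, destination, mean interval in seconds, jitter)
            suspects.append((src, dst, round(avg, 1), round(jitter, 3)))
    return sorted(suspects)


if __name__ == "__main__":
    # A device checking in every 300 s versus a user browsing at irregular times.
    flows = [Flow("10.0.0.5", "203.0.113.7", 1000 + 300 * i) for i in range(10)]
    flows += [Flow("10.0.0.9", "198.51.100.2", t)
              for t in (0, 40, 400, 410, 2000, 2600, 5000)]
    for device, dest, interval, jitter in find_beacons(flows):
        print(f"possible beacon: {device} -> {dest} every ~{interval}s (jitter {jitter})")
```

Flagged device/destination pairs are the kind of threat data that could then be fed back into earlier-stage controls, for example by blocking the destination at the perimeter and isolating the device for investigation.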

Ultimately, by looking at the end of the kill chain (e.g. by analyzing outbound traffic), enterprises can turn their obsolete reactive approach into a relevant proactive stance — which, frankly, is the only one they can afford to take if they want to reduce risk, protect their assets, and stay at least one step ahead of the bad guys in an ever-worsening threat landscape.