Figuring out what “normal” means is one of computer security’s great challenges. Everyone seems to think that if we only knew what “normal” is, we’d be able to subtract it from what’s going on around us and “abnormal” would magically fall out the other side. Unfortunately, it’s not that easy.

In statistical terms, we can equate “normal” with the mean or average, but that still leaves us with a huge number of outliers on either side of the mean. For a metrics program, we’re more interested in knowing how we compare with industry norms, which is another whole pot of spaghetti because most industries don’t publish metrics, and any metrics they did publish probably couldn’t be generalized.

For example, imagine a security metrics nerd from a bank talking to a security metrics nerd from a hotel, and the hotelier says, “I had an abnormal surge of attack traffic yesterday: 3,000 attacks instead of my usual 1,000 per day.” The bank nerd might be horrified because he is thinking about the typical attack rate on his internal network, while the hotelier forgot to mention that he’s referring to his guest services network, which is full of transient traffic and uncontrolled users.

Neither of them has communicated any context about the size of the network in question; 3,000 attacks/day on a network with 10 users is very different from the same number of attacks on a network of thousands of systems. That’s a slightly contrived example, but it illustrates the point: to communicate about our metrics, we need to ground our experience in terms of what is “normal” for us; then we can talk with someone else about how our current experience deviates from our usual experience. Otherwise, we really can’t communicate our metrics effectively with anyone who isn’t in a similar environment.
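One way to ground a number in “normal for us” is to report it relative to our own history rather than as a raw count. The following is only a sketch: the function name and the daily counts are made up for illustration, and it assumes the mean and standard deviation are a reasonable summary of the baseline, which may not hold for bursty traffic.

```python
from statistics import mean, stdev

def deviation_from_baseline(history, current):
    """Describe `current` relative to our own past daily counts."""
    baseline = mean(history)
    spread = stdev(history)  # sample standard deviation; needs >= 2 points
    return {
        "baseline": baseline,
        # How many times our usual level is today's value?
        "ratio": current / baseline,
        # How many standard deviations away from usual? None if history is flat.
        "z_score": (current - baseline) / spread if spread else None,
    }

# Hypothetical "usual" days on the hotel's guest network (invented numbers).
usual_days = [950, 1000, 1050, 980, 1020]
report = deviation_from_baseline(usual_days, 3000)
print(f"{report['ratio']:.1f}x our usual rate")
```

“Three times our usual rate” travels better between the bank and the hotel than “3,000 attacks,” though it still says nothing about which network was measured.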

You need to bear this in mind while establishing your metrics: you either need to figure out how to normalize a metric so it’s contextually relevant to your audience, or you need to decontextualize the metric. That’s why you see a lot of metrics presented as such-and-such per capita; the premise is that the such-and-such rate is fairly constant among individuals, and the population size doesn’t matter because it has been factored out.

If you think about that, sometimes it makes sense, but other times it may be deceptive: edge cases where the population or the such-and-such rate is small won’t generalize well.
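To see why small populations are an edge case, here is a rough sketch of a per-capita calculation. The function name and both networks are hypothetical; the only point is that the same handful of extra events moves the rate a lot on a tiny network and barely at all on a large one.

```python
def per_capita(events, population):
    """Events per member of the population, e.g. attacks per desktop per day."""
    if population <= 0:
        raise ValueError("population must be positive")
    return events / population

# Two invented networks that start at the same rate, then each sees
# 5 additional attacks in a day.
for desktops, attacks in [(10, 20), (5000, 10000)]:
    before = per_capita(attacks, desktops)
    after = per_capita(attacks + 5, desktops)
    print(f"{desktops:>5} desktops: {before:.3f} -> {after:.3f} attacks/desktop/day")
```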

For example, suppose our hotel network manager tries to communicate about the jump in the observed attack-rate: “Our attack-rate has jumped from 2 attacks per desktop/day to 20!” That metric appears to be more generally applicable because it factors out the size of the network. The bank security nerd can think, “Well, I have 0.1 attacks per desktop/day” and believe that the bank is relatively better.

The problem is that we still don’t know whether the attack rate depends on the underlying number of systems, or whether the hotel network manager compiled the metric by including attacks against the hotel’s Internet-facing website. In other words, it’s always crucial to provide metrics that encourage apples-to-apples comparisons.
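One possible way to keep a rate from losing its context is to carry the raw count, the denominator, and the scope along with it. This is a sketch under my own assumptions: the `RateMetric` class, its field names, and the numbers are all invented for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RateMetric:
    name: str
    events: int      # raw count that the rate factors out
    population: int  # denominator, e.g. number of desktops
    period: str      # time window the count covers
    scope: str       # which systems were (and were not) counted

    @property
    def rate(self):
        return self.events / self.population

    def describe(self):
        return (
            f"{self.name}: {self.rate:.1f} per system per {self.period} "
            f"({self.events} events across {self.population} systems; "
            f"scope: {self.scope})"
        )

# Hypothetical figures for the hotel example.
hotel = RateMetric(
    name="attacks",
    events=6000,
    population=300,
    period="day",
    scope="guest network desktops, excluding the public website",
)
print(hotel.describe())
```

Anyone reading the description can rebuild the rate, or recompute it against a scope that matches their own environment.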

Recall that in the third installment of this series we defined a metric as “some data and an algorithm for reducing and presenting it to tell a story.” You always want to include some of the back-story.

You may also want to include a value judgment to help readers interpret the significance of changes in the measurement. For example: “higher is better” or “values near the average indicate the machinery is functioning correctly.” In general, humans like charts that go up, where “up” equates to “good,” so you may want to use color or a label to indicate if that is not the case. Also consider that some metrics fluctuate a lot, whereas others are fairly stable, so that a jump or drop in value indicates a departure from normal. If you have an idea about what the expected ranges are, put that directly on your chart.

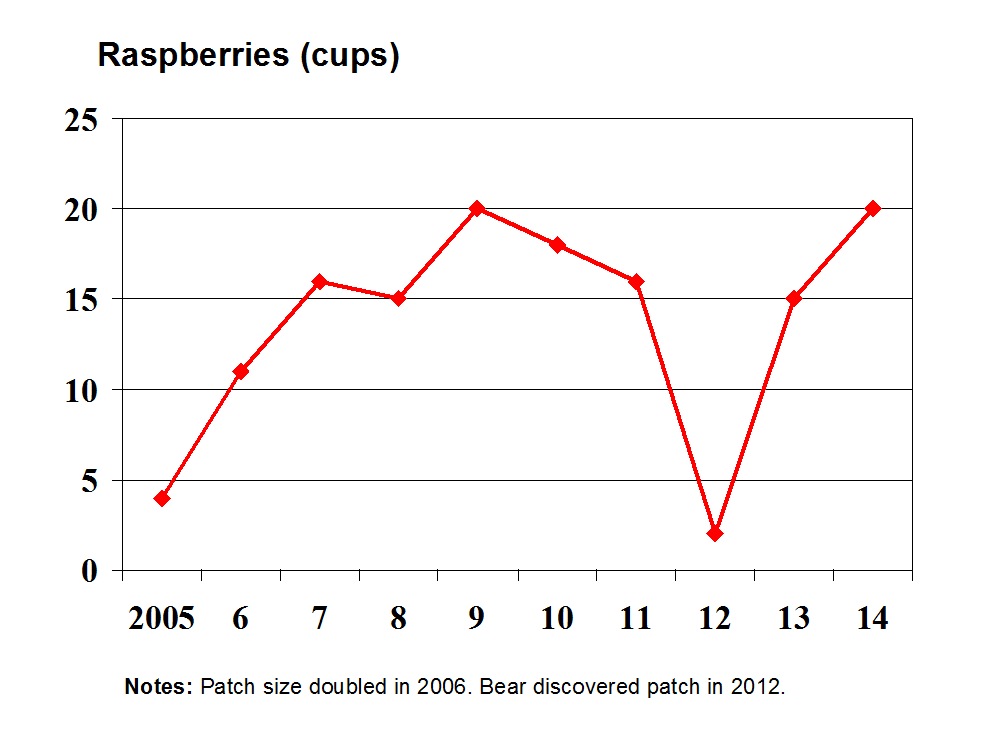

In the chart above, I did not include average or expected-value bars for two reasons. First, I can’t really say anything about expected yield because I changed the size of my raspberry patch in 2006. Second, the average yield wouldn’t reveal anything useful other than “every year is an improvement when a bear doesn’t mash your raspberry patch flat.”

Stock market metric nerds spend a lot of time trying to represent normal fluctuations of certain stock prices. For that purpose, they use several ways to show the average or average fluctuation as a leading indicator. That’s helpful if you’re trying to argue that a metric has predictive value and shows some kind of relevant trend. One reason it’s important to include that kind of information for stock-pickers is that they aren’t really interested in the long-term performance of a given stock in past years; they are trying to figure out where it’s heading next week or over the next year. So if you look at a chart about Cisco Systems stock and you’re dialed all the way back, your eye can easily pick out the long-term trend line:

A stock chart (source: Yahoo! Finance) showing fluctuations in a stock over 3 months

Because we are “zoomed in” on a smaller period, the simple moving average calculation (red line) may be useful to indicate a short term trend.
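For readers who haven’t computed one, a simple moving average is just the mean of the most recent N values, recalculated at each point. Here is a minimal sketch; the function name and the prices are made up, and I’m assuming an unweighted trailing window, which may not be exactly what a given charting site uses.

```python
def simple_moving_average(values, window):
    """Trailing, unweighted mean of the last `window` values at each point."""
    if window < 1:
        raise ValueError("window must be at least 1")
    averages = []
    for i in range(len(values)):
        if i + 1 < window:
            averages.append(None)  # not enough history yet
        else:
            chunk = values[i + 1 - window : i + 1]
            averages.append(sum(chunk) / window)
    return averages

# Invented closing prices, not real stock data.
prices = [10, 11, 12, 13, 12, 11]
print(simple_moving_average(prices, 3))
```

The wider the window, the smoother the line, and the longer it takes to react to a real change.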

The same stock (source: Yahoo! Finance) showing its performance during the entire time for which data is available

Here you can see that the moving average calculation doesn’t really indicate much, except that the overall trend was headed where the stock was actually going.

Choosing the right scope for presenting your data is a crucial decision, since you can easily manipulate your audience’s perception of metrics by zooming in inappropriately, or zooming out to obscure short term fluctuations. In the raspberry chart example, I could hide the incident with the bear by zooming out to the date when I moved to the farm (2003), showing several years of “zero raspberries” until a sudden, dramatic surge when I actually planted the raspberry patch. In that case, the trend line would look very positive!
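To make the zooming effect concrete, here is a sketch that fits a straight-line trend to the same series over two different windows. The series is invented (it is not my raspberry data) and the function name is my own; an ordinary least-squares slope stands in for “the trend line.”

```python
def trend_slope(values):
    """Least-squares slope of values against their position (units per step)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den

# Invented series: several zero years, a surge, one bad year, a recovery.
series = [0, 0, 0, 8, 10, 3, 9]

print("zoomed out:", trend_slope(series))      # slope over the whole series
print("zoomed in: ", trend_slope(series[-3:])) # slope over the last three points
```

With these made-up numbers the zoomed-out slope is positive while the zoomed-in slope is negative: same data, opposite story.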

If you’re just looking at the close-up performance for the last month or so, moving averages serve as an indicator of the overall trend line.

Lessons:

• When producing metrics that may be used comparatively, always try to provide underlying information that may have been factored out, so that anyone using your data can match their data to it.

• A blog that often contains inspiring metrics is Paul Krugman’s blog on the New York Times website. Whether you agree with his politics or not, you’ve got to admire his ability to use existing data to tell a story.

• Sometimes you need fences that can keep out more than deer.

Next up: Visually presenting a metric, and why the pie chart is evil