Offensive security as a category has blown past its tipping point and is in danger of becoming one of those overused terms that mean everything and nothing. From the early days of “Hack Back” to today’s Red Teams, where did the offensive cyber mindset first take root, what does it really mean today, and where is it going next in the age of AI?

For many of a certain age, “Offensive Security” carried the more aggressive “Hack Back” connotation, essentially a form of corporate computer vigilantism. Using the same Tactics, Techniques and Procedures (TTPs) as their adversaries, teams would counterattack criminals, often causing collateral damage to innocent systems along the way. It conjured images of shadowy government entities, or even large corporations with “Labs” teams based in countries outside the purview of the US Computer Fraud and Abuse Act (CFAA). While “Hack Back” most certainly still occurs, legal and ethical concerns keep it limited in scope. However, the concepts, knowledge and use of offensive TTPs to assess and stress test corporate defenses have gone mainstream. The reason is simple: defense is hard, and in many cases doomed to fail, repeatedly.

“Offending” sensibility

The security industry is cyclical and predictable. Once a technology problem or type of attack is identified, there is a gold rush to monetize the opportunity as quickly and profitably as possible. While this drives innovation, it also sows confusion, as new vendors strive for recognition and incumbents muddy the waters until they can adapt. As solutions of varying quality fight for attention, many push automation as a security “easy button” that promises to mount a defensive or offensive strategy with little human interaction and easy adoption. While heavy automation and low friction are attractive in concept, they can become costly in execution. Automation depends on precedent, and in most cases on pre-existing victimization. It also builds a defense incrementally and linearly based on that knowledge, while attackers innovate rapidly and in parallel.

Security in practice is never “set it and forget it.” The speed at which criminals adjust to defenses keeps them a moving target. Purpose-built, automated platforms alone can’t keep pace, especially at scale; leaning on them simply delays an inevitable, and potentially catastrophic, compromise. The problem is exacerbated by an ever-expanding, increasingly interconnected corporate ecosystem of networks, endpoints, Cloud, applications, IoT and more. These elements must be assessed individually, but also evaluated as the aggregate attack surface they present together.

Offensive Security turns the focus away from the attacker or attack of the month and looks inward at the organizational ecosystem. It acknowledges at the outset that not everything is an APT, not every organization is a nation-state target, and not every attack follows a predictable pattern, relies on a technical vulnerability, or is even particularly complex. Consider:

- Ransomware is fast and noisy, and about volume, shame and/or destruction.

- Phishing and Social Engineering are about subtlety, often aimed at stealing everything from financial assets to IP.

- Cloud attacks run the gamut from low-hanging misconfigurations to supply-chain infiltrations.

- Many actors combine an array of methods, targets and, in some cases, even goals.

In contrast to the majority of defensive approaches and even some attack simulation offerings, Offensive Security does not focus on discrete attacks, singular actors, or Indicators of Compromise (IoCs). Instead, it seeks to understand both sides of the battlefield in their entirety: organizational assets and attack surface, and the threat models that map to them. In this way, Offensive Security provides an opportunity to outpace attackers by preemptively and methodically eliminating attack paths, frustrating an attacker into inaction or confining them to a limited compromise that cannot spread.

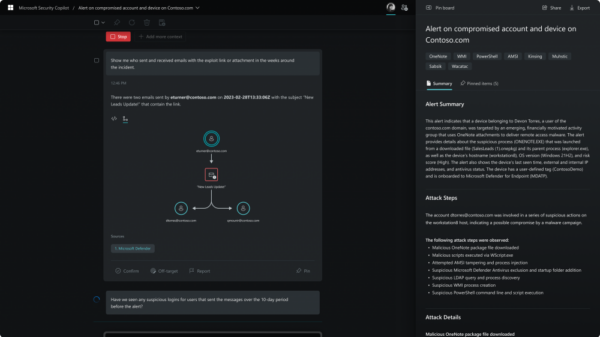

It’s here that we need to emphasize one of the critical differences between defensive solutions and true Offensive Security approaches: Offensive Security is about both innovation AND intuition. Much like malicious actors, offensive security teams need the speed and agility of human intelligence to anticipate and adapt. For Offensive Security to excel, it cannot simply have humans managing and monitoring technology; it needs technology that amplifies and augments elite human talent. A strong combination of technology and humans is the most effective approach to assessment. Technology can rapidly identify, analyze and filter, while humans connect the dots, validate, fix, and effect continual improvement and advancement. At its most comprehensive, an Offensive Security program will combine attack emulation with defensive assessment and penetration testing to eliminate or minimize the attack surface before attackers have a chance to assess and act. This is known as Purple Teaming, because it combines offensive techniques (Red Team) with defensive concepts and mechanisms (Blue Team).

Automate This!

However, we come not to bury automation, but to praise it. While overreliance on automation can lead to oversimplification and underestimation of risk, it can also be a powerful ally. For the aforementioned human teams, intelligent and focused automation is a force multiplier. This is where Artificial Intelligence (AI) can change, and in small ways already is changing, the game. While Large Language Models (LLMs) are rapidly becoming more adept at comprehension, they remain, and for the foreseeable future will remain, dependent on human intellect, or suffer from the lack thereof. Beyond that, LLM output will always require human review and validation. Qualifiers aside, AI already plays a substantial role in attack emulation, from developing phishing campaigns to building exploits and tools. But it’s only as good and effective as what it is fed.

The timeless computing concept of Garbage In, Garbage Out remains undefeated. Blind trust in AI is like trying to sustain a healthy diet on junk food. We need to fully understand and use the best ingredients and the soundest cooking methods, while continuing to account for changes in our own organizational “physiology” to ensure a long and productive life.