The Time to Move to a Narrative-driven Model is Long Overdue

I’ve written before about moving to a narrative-driven model for security operations. Those who know me or have seen me present on this topic know that I’m passionate about it. For those who aren’t familiar with the narrative-driven model for security, I’ll briefly explain the concept at a high level as an introduction to this piece. For readers interested in additional background or depth, previous pieces I’ve written for SecurityWeek and other publications go into far greater detail than I can in this introduction.

The current security operations model is an alert-driven one, and most organizations suffer from alert fatigue. They find themselves inundated with far too many alerts, each of which has far too little context. The ultimate goal of a security operations function should be to review each alert and make an informed decision about what, if any, response is necessary. As we all know, the current state of affairs is far from this ideal.

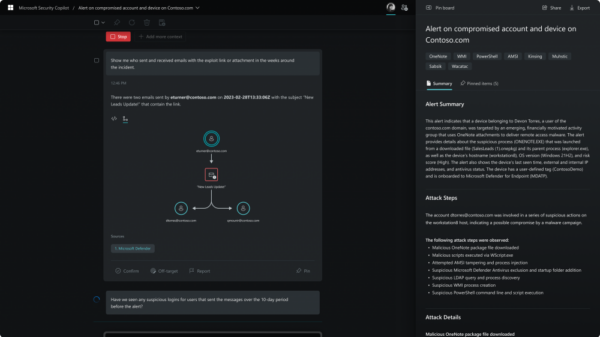

The narrative-driven model involves feeding the work queue with better content, and subsequently enriching that content with additional context. What results is a queue of painted pictures and assembled puzzles that can be presented to the analyst to facilitate more informed decision-making. That is the narrative-driven model boiled down to its essence.

I often present the narrative-driven model when I speak publicly, and more often than not, people want to know how they can build the narrative. I discuss this during the talks I give on the topic, but for the benefit of everyone, I’d like to list some of the steps, at least at a high level, in this piece.

In my experience, building the narrative can be boiled down to a nine-step process, at least at a strategic level:

● Identify risks, goals, and priorities: In order to assemble the most relevant narratives, we need to understand what risks and threats the organization is most concerned about. After all, the goal of any security program should be to strategically mitigate risk. Risks and threats can be prioritized and further broken down into goals and priorities. We can use this prioritized list to guide us through the remainder of the process.

● Identify gaps in telemetry: Having the right data is a key component of building the narrative. Simply put, if we are to have any chance of painting an accurate picture of what happened, we need the data to support that effort. Of course, different data sources will have different value and relevance to this effort. It is on us to identify the most valuable and relevant data sources, and to subsequently identify gaps in visibility. Any blind spots should be filled in with the appropriate instrumentation, be it network, endpoint, mobile, or log-based.

● Develop content: Once an organization has the appropriate visibility, attention turns to properly leveraging that visibility. Content should be developed so as to alert on all of the activity that matches the list of prioritized risks and threats created during the first step, and none of the activity that doesn’t. Why alert on something that you’ve already decided you aren’t concerned about? That will just create additional noise and complexity.

● Improve signal-to-noise ratio: Almost all organizations today suffer from alert fatigue. There are simply too many alerts and too many false positives. This creates a tremendous amount of noise that makes it impossible for organizations to review every alert. As a result, important alerts are overlooked because they are lost in the noise. Throw out the default rule set in favor of a small number of more reliable, higher fidelity alerts based upon the content developed in the previous step.

● Concentrate into unified work queue: Most people cannot concentrate on multiple things at the same time. Even those who can certainly can’t give six things the same dedicated attention they can give to one. Focus scarce analyst resources on a single, unified work queue into which all alerts flow. This ensures that every alert is reviewed and facilitates the next few steps that build the narrative around the alert.

● Enrich with supporting evidence: Most of the time, we know what supporting evidence we need to add to an alert to give it additional context. For example, we almost always identify the user and the asset (or assets), and perform several other common procedural steps. Rather than doing this work manually, why not automate it to enrich the alert with the supporting evidence it so desperately needs before it even hits the work queue?

● Automate common analysis steps: I’m often amazed at how, for most alerts, somewhere around 80% of the queries run and analysis performed manually during alert qualification and validation are nearly identical. Automating these steps allows for a continuation of the enrichment that began in the previous step. For example, supporting contextual information can be pulled from network forensics, endpoint forensics, mobile, and various log sources. In addition, multiple alerts can be brought under the same common storyline as we make our way towards building the narrative.

● Interleave intelligence: Once we have most of our story together, we need to understand a bit more about the nature of the threat we’re dealing with. This allows us to make a far more informed decision about the type of response (if any) that is necessary. For example, are we dealing with a routine mass malware type of infection, or is this something far more targeted and worrisome? Or, as another example, is a particular repetitive network activity caused by a misconfiguration, or does it match a pattern often used by a specific attack group? Intelligence about the type of activity we’re building a narrative around can help us better understand the answers to these questions.

● Present the narrative: In place of a voluminous queue of alerts, present the team of analysts with a reasonably sized queue of narratives. Instead of hundreds of thousands of context-less alerts, the team receives perhaps a few hundred narratives. Each one is reviewed by an analyst, and because of the additional context, the analyst needs far less work to reach the end goal of making an informed decision. Detection is greatly improved, as alerts no longer fall through the cracks or fly under the radar. Analysts spend less time waiting for queries to return, making them far more efficient. Response is much more rapid, as the time to an informed decision is greatly reduced.
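
To make the later steps a little more concrete, here is a minimal Python sketch of enrichment, grouping alerts under a common storyline, and interleaving intelligence. It is only an illustration under stated assumptions, not a reference implementation: the alert fields (`rule`, `asset`, `domain`), the `ASSET_OWNERS` and `INTEL` lookup tables, and the choice to group by asset are all hypothetical stand-ins for whatever identity, asset, and intelligence sources an organization actually has.

```python
from dataclasses import dataclass, field

# Hypothetical lookup tables standing in for real identity/asset
# and threat intelligence sources.
ASSET_OWNERS = {"10.0.0.5": "alice", "10.0.0.9": "bob"}
INTEL = {"evil.example": "targeted", "spam.example": "commodity malware"}


@dataclass
class Narrative:
    """One storyline presented to the analyst in place of raw alerts."""
    asset: str
    user: str
    alerts: list = field(default_factory=list)
    intel: set = field(default_factory=set)


def enrich(alert):
    """Return a copy of the alert with user and intelligence context added."""
    enriched = dict(alert)
    enriched["user"] = ASSET_OWNERS.get(alert["asset"], "unknown")
    # None when the alert has no domain or the domain is not in INTEL.
    enriched["intel"] = INTEL.get(alert.get("domain"))
    return enriched


def build_narratives(alerts):
    """Enrich each alert, then group alerts by asset (an assumed grouping key)."""
    narratives = {}
    for alert in map(enrich, alerts):
        narrative = narratives.setdefault(
            alert["asset"], Narrative(alert["asset"], alert["user"])
        )
        narrative.alerts.append(alert["rule"])
        if alert["intel"]:
            narrative.intel.add(alert["intel"])
    return list(narratives.values())


# Made-up sample alerts for illustration only.
alerts = [
    {"rule": "dns-suspicious-domain", "asset": "10.0.0.5", "domain": "evil.example"},
    {"rule": "proxy-beaconing", "asset": "10.0.0.5", "domain": "evil.example"},
    {"rule": "av-detection", "asset": "10.0.0.9", "domain": "spam.example"},
]

narratives = build_narratives(alerts)
for n in narratives:
    print(n.asset, n.user, n.alerts, sorted(n.intel))
```

In this sketch, three alerts collapse into two narratives, each already carrying the user, the related alerts, and an intelligence label by the time it reaches the work queue. A real deployment would likely correlate on more than the asset alone (time window, user, or indicator, for example).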

Many organizations continue to suffer from alert fatigue. Those same organizations find it very difficult to make informed decisions in a timely manner. The alert-driven model for security operations does not provide an adequate framework to support timely, actionable, and informed decisions. The time to move to a narrative-driven model is long overdue.