Are You Using the Right Application Assessment Tools in the Right Place at the Right Time?

To develop any program, you start by assessing your current situation to establish a baseline of where you stand. Developing a secure development lifecycle is no different. Many companies begin the baselining process by assessing one or more applications to determine the level of risk those applications pose. Naturally, people first look for tools that can “perform an assessment.”

Types of Tools

Generally, there are two types of application assessment tools on the market: static analysis tools and dynamic analysis tools. Static analysis tools (e.g. Fortify SCA, Armorize CodeSecure) scan application source code (or, in some cases, a representation of it) for vulnerabilities. Dynamic analysis tools (e.g. HP WebInspect, IBM AppScan) scan live applications such as a web application or a web service.

For a long time the vendors in each camp battled it out, and to a degree, they still do. Static vendors would claim the best way to find vulnerabilities in an application was by performing code level analysis, while dynamic vendors made the claim that what you really needed was something that worked against a live system because that’s what attackers were doing.

Then there were consultants who claimed tools were stupid and the best work was always performed by consultants. I remember one particularly horrific presentation in which the consultant was trying to explain to an audience how they once found four times as many cross-site scripting issues as a tool did during an assessment. Based on this single experience, everyone was encouraged to stop using tools and use humans to assess applications instead.

They were all right, and they were all wrong.

When Humans Succeed!

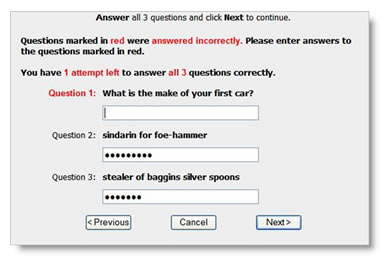

Let’s begin with a simple example. Shown here is a cleaned-up version of a vulnerable password reset webpage that I found during an assessment a couple of years ago. Can you name three things that are wrong with this picture?

First, the web application tells the user which questions have been answered correctly. Second, the last two questions are not even questions. The application allowed users to define their own questions, assuming that they would know how to define security questions the right way. The answers to the last two questions can be easily Googled. Finally, the first question has a rather limited set of potential answers, as there are only a handful of car manufacturers. A question with a larger set of potential answers would be, “What street did you live on in third grade?”
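To see why per-question feedback matters, here is a minimal sketch of the arithmetic. The answer-space sizes are hypothetical numbers chosen for illustration, not figures from the assessment. When the page reveals which answers are correct, an attacker can attack each question on its own, so the worst-case guess counts add up rather than multiply.

```python
from math import prod


def worst_case_guesses(answer_space_sizes, per_question_feedback):
    """Upper bound on the guesses needed to get every answer right."""
    if per_question_feedback:
        # Each question can be brute-forced independently, so costs add.
        return sum(answer_space_sizes)
    # Without feedback, all answers must be guessed correctly together.
    return prod(answer_space_sizes)


# Hypothetical sizes: about 50 car makes, plus two larger answer spaces.
sizes = [50, 1000, 1000]

print(worst_case_guesses(sizes, per_question_feedback=True))   # 2050
print(worst_case_guesses(sizes, per_question_feedback=False))  # 50000000
```

Under these assumed sizes, the feedback alone shrinks the attacker's work from tens of millions of attempts to a couple of thousand.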

Even if we combined all the best commercial static and dynamic analysis tools, they would never find the problems with the security questions above. That is, until computers learn to read (all hail our robot masters). There are more issues, which will be left as an exercise for the reader.

If static and dynamic analysis tools can’t find the critical design flaws above, then how are they found? Well, the same way that we just found them: using humans. To identify design flaws (and more complex vulnerabilities), it’s necessary to have a human in the loop. In my personal experience, design flaws make up between 30 and 40% of the critical issues identified during our assessments.

If your organization has made the mistake of designing a secure development lifecycle around the use of a tool, then you may be missing critical design level flaws. An application architect with proper security training, adhering to secure design best practices, should be in a position to eliminate a poor design such as the one above. By eliminating the poor design before it is implemented, you avoid the time-consuming activity of code refactoring.

But this doesn’t mean that humans are infallible. On the contrary, humans have a long and well-documented history of failure.

When Dynamic Tools Rule.

When do people fail? All the time—as do static analysis tools.

There are many scenarios where humans simply cannot keep up with technology. The simple fact is that, for many vulnerabilities, the best way to locate them is with tools. Take, for example, the identification of directory indexing. Directory indexing occurs when a web server has been improperly configured during deployment and allows users to view a listing (or index) of the files within a directory.

When humans find this issue it is usually by chance. It’s possible, however, to find this problem by systematically crawling through the web site and truncating the path in an attempt to find directory indexing. For example, if a page exists at http://www.vulnerable.com/resources/files/userguide.htm then an attacker might try to identify and access the directory listing by removing (truncating) userguide.htm from the URL and submitting only the directory: http://www.vulnerable.com/resources/files/.

Having to do this for an entire website? Haha. Depending on the website, it might take days, months, or even years for a person to perform these checks manually. Humans fail.

Clearly, the solution is to automate the process across the many tens, hundreds, and sometimes even thousands of potential requests this comprehensive undertaking would require. What would cause an expert to die of boredom could be accomplished by a tool in a matter of minutes.
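The truncation step is easy to automate. The following is a minimal sketch, not how any particular scanner works: `directory_candidates` and `looks_like_listing` are hypothetical helper names, the input URL is assumed to point at a file, and the listing check is a rough heuristic based on the "Index of /" title that Apache-style listings usually carry. Fetching each candidate is left out.

```python
from urllib.parse import urlsplit, urlunsplit


def directory_candidates(page_url):
    """Return the parent directory URLs of page_url, deepest first.

    Assumes the last path segment is a file name and drops it.
    """
    parts = urlsplit(page_url)
    segments = [s for s in parts.path.split("/") if s]
    candidates = []
    # Walk up from the file's own directory to the site root.
    for depth in range(len(segments) - 1, -1, -1):
        path = "/" + "/".join(segments[:depth])
        if not path.endswith("/"):
            path += "/"
        candidates.append(urlunsplit((parts.scheme, parts.netloc, path, "", "")))
    return candidates


def looks_like_listing(html):
    """Heuristic only: flag pages that contain an 'Index of /' marker."""
    return "index of /" in html.lower()


for url in directory_candidates(
    "http://www.vulnerable.com/resources/files/userguide.htm"
):
    print(url)
```

A crawler would feed every discovered page URL through a function like this, request each candidate once, and flag the responses that look like listings.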

It’s also important to keep in mind that a static analysis tool would never be able to find this issue because it only searches for vulnerabilities within source code. This particular vulnerability is introduced when the application is deployed on an improperly configured web server. So, no matter how many static analysis tools you run against the application, this issue will never be found.

There are many issues like directory indexing where humans and static analysis tools fail, and dynamic scanning tools reign supreme.

Static Reigns Supreme!

Static analysis excels at finding vulnerabilities that require examining data flows within an application. One example is identifying SQL injection bugs (a data flow issue) in an application that has complex workflows, such as the steps to create a personal profile.

Let’s assume that there’s a critical SQL injection bug, but it can only be found at the very end of a series of profile setup pages, and the vulnerable feature only appears if you’re a non-smoking dog lover who wants kids. From a technical standpoint, this means that a very specific set of options has to be selected from an extremely high number of possible permutations to expose the vulnerability through the web interface. It would be infeasible for dynamic scanners to test every possible permutation of options and checkboxes in an attempt to expose the vulnerable feature, much less perform the testing required to identify the vulnerability. In any workflow of moderate to high complexity, dynamic scanners cannot test each branch because there are simply too many permutations.
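A quick back-of-the-envelope calculation shows how fast the permutations grow. The form layout below (20 yes/no checkboxes and three five-way dropdowns) is a hypothetical profile wizard invented for illustration, not the application from the assessment.

```python
from math import prod


def count_option_combinations(choices_per_field):
    """Number of distinct ways to fill in a form, one choice per field."""
    return prod(choices_per_field)


# Hypothetical wizard: 20 yes/no checkboxes across the setup pages.
checkboxes = [2] * 20
print(count_option_combinations(checkboxes))  # 1048576

# Add three dropdowns with five choices each.
with_dropdowns = checkboxes + [5, 5, 5]
print(count_option_combinations(with_dropdowns))  # 131072000
```

Even this modest form yields over a hundred million combinations, and each one would need its own multi-page submission before any injection testing could start.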

In order to identify this deeply embedded issue, you must analyze the code using static analysis tools. The static analysis tool would be able to trace through the code, create a map of the data flow branches, and determine which paths would lead to the embedded SQL injection point. Visually, it would look something like the following:

Of course, a human could identify the SQL injection vulnerability by either working through the workflows or reading through the code, but it would take an inordinate amount of time to do this against any reasonably sized codebase. When reviewing code, the human brain essentially mimics the behavior of the computer as it processes code while simultaneously having to act like an attacker trying to break the code. This is extremely time-consuming, and humans are almost always the costliest resources. The economics of a fully manual code review usually rule it out as an option for all but the most critical applications.
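The data flow tracing described above can be illustrated with a toy graph search. This is a simplified sketch of the idea, not how any commercial static analyzer is implemented. The graph, node names, and the `tainted_paths` function are all hypothetical: each edge means "data flows from this point to that one", and the search returns every route from user input to the database call.

```python
def tainted_paths(flows, source, sink):
    """Return every acyclic path from source to sink in a data flow graph."""
    paths = []
    stack = [[source]]
    while stack:
        path = stack.pop()
        node = path[-1]
        if node == sink:
            paths.append(path)
            continue
        for nxt in flows.get(node, []):
            if nxt not in path:  # skip nodes already visited on this path
                stack.append(path + [nxt])
    return paths


# Hypothetical flows in a profile wizard. Only the "bio" field reaches
# the query builder; the other fields end at a validation step.
flows = {
    "request.param": ["profile.smoker", "profile.pets", "profile.bio"],
    "profile.smoker": ["validate()"],
    "profile.pets": ["validate()"],
    "profile.bio": ["build_query()"],
    "build_query()": ["db.execute()"],
}

for path in tainted_paths(flows, "request.param", "db.execute()"):
    print(" -> ".join(path))
```

The search does not care how many clicks it takes to reach the vulnerable page in the browser. It follows the code paths directly, which is why buried flows like this one are within reach of static analysis.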

In this case, the best tool for the job is a static analysis tool.

When to Use Tools and Humans

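Let’s take what we’ve learned and summarize it into a nice table:

| Issue | Dynamic analysis tools | Static analysis tools | Humans |
| --- | --- | --- | --- |
| Directory indexing (deployment) | Yes | No | Yes, but slowly |
| Embedded SQL injection (data flow) | No | Yes | Yes, but slowly |
| Weak security questions (design) | No | No | Yes |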

Dynamic analysis tools are well-suited for finding issues like directory indexing, but will fail at finding an embedded SQL injection issue or a secure design problem. Static analysis tools can help identify issues related to data flow, but fail at finding deployment-type issues and design problems. Humans can do it all, but you probably won’t be able to get them to do it quickly or willingly unless impressment comes back into fashion. You want to use the right tool for the problem.

In addition to the above, we also know that there’s a certain time that you want to use these tools. Applying everything at the very end of the software development process probably isn’t the best use of your time and effort.

Security question issues are a design-level problem. It’s best to apply human analysis to find these types of issues during the design phase of the process. On the flip side, it would be silly to try to run a static or dynamic analysis at this point because no application code has been written yet and the site isn’t running.

SQL injection vulnerabilities are introduced when the design is implemented in the application code and the SQL query isn’t constructed properly. Static analysis tools run during coding can eliminate these issues early, before more code is built around or on top of the vulnerable component. You still can’t run the dynamic tool, because the application hasn’t been deployed.
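As a concrete illustration of improper query construction, here is a minimal sketch using Python's standard `sqlite3` module. The table, data, and function names are hypothetical. The first function concatenates user input into the SQL text, which is the pattern a static analysis tool would flag. The second binds the input as a parameter.

```python
import sqlite3

# In-memory database with hypothetical sample data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "a1"), ("bob", "b2")],
)


def find_user_unsafe(conn, name):
    # Vulnerable: user input becomes part of the SQL statement itself.
    query = "SELECT name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized: the input is bound as data and never parsed as SQL.
    return conn.execute(
        "SELECT name FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
print(len(find_user_safe(conn, payload)))    # 0: no user has that name
```

Catching the concatenated version while the code is being written is far cheaper than discovering it after other features depend on it.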

Finally, directory indexing issues are introduced when the application is released and deployed onto a misconfigured web server. Trying to identify it before this point would be futile.

Summary

So what does all this mean? You need to use the right tools in the right place at the right time.