Biometric Authentication is No Longer Just the Stuff of Spy Movies or Reserved for Military-Grade Installations

According to a 2018 report from the Center for Identity at the University of Texas, the rate of adoption for biometrics has accelerated over the last five years. That shouldn’t come as a surprise. In the age of digital transformation, organizations are looking for innovative and secure ways to authenticate users seeking access to resources. After all, accurate, reliable authentication is critical to managing the digital risk that comes with an unprecedentedly dynamic and diverse workforce connecting to numerous apps and other resources. As identity management becomes more complex, biometrics has quickly entered the scene as organizations look to enhance their security postures.

Biometric authentication is no longer just the stuff of spy movies or reserved for military-grade installations. Nearly every person now carries a biometric-authentication device in their pocket, in the form of a phone. But here’s an interesting point from the report: The adoption rate for facial recognition (15 percent) lags far behind the adoption rate for fingerprint scanning (40 percent). Digging a little more deeply into the data, we also learn that consumers are far more comfortable with fingerprint scanning as a means of biometric authentication than with facial recognition. Why are consumers less comfortable with facial recognition? To some extent, the answer lies in consumer perceptions, including misconceptions, about the technology and its implications for privacy and security. It’s time to address some myths about how facial recognition works, to help increase consumer comfort with biometric-authentication technology.

Myth #1: The government-database privacy threat

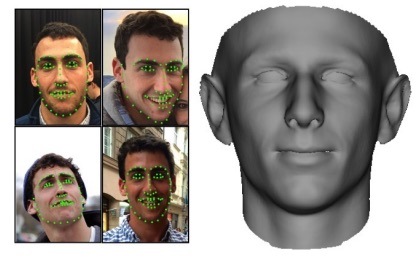

Recently, a colleague just back from vacation shared that the friend she traveled with refused to use the facial-recognition feature on her phone during their trip, because she believed it would result in a photo of her face being permanently stored in a government database. Let’s be perfectly clear: This is a myth. For one thing, the kind of facial recognition that’s used on phones today doesn’t employ photos for purposes of identification; instead, it relies on technology that creates a mathematical representation of the user’s face. Moreover, that representation is typically encrypted, locked away locally in the user’s device and not stored anywhere else—not in the cloud and not in an external database, government or otherwise.

Myth #2: The fake-photo security risk

Like the myth of user photos on a phone ending up in a government database, this one is tied to the mistaken idea that facial recognition technologies rely on photos to authenticate users. The fear is that all someone would have to do to gain access to a consumer’s secure applications or accounts is get their hands on that consumer’s photo and use it to pretend to be them, much as one might steal someone’s password and use it to get into password-protected resources. This myth does have at least some basis in reality; an article in Wired last year reminded us that back in 2009, researchers fooled face-based login systems by simply holding a printed photo of the device’s owner up to the camera. But, again, that’s irrelevant when it comes to modern approaches to facial biometrics, because there is no photo. Rather, facial recognition is based on three-dimensional mapping of the user’s face. What you end up with is a complex series of data points that can’t be easily spoofed.
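To see why a flat photo struggles against three-dimensional mapping, consider a deliberately simplified sketch in Python. It assumes the sensor returns a small grid of distances in millimeters; the function names, the sample grids, and the 20 mm threshold are all hypothetical and chosen only for illustration. Real systems are far more elaborate than this.

```python
# Illustrative only: a toy check that a scanned surface has facial relief.
# The threshold and depth grids below are hypothetical values.

MIN_FACE_RELIEF_MM = 20.0  # assumed minimum nose-to-cheek depth difference


def depth_range_mm(depth_map):
    """Return the difference between the farthest and nearest depth sample."""
    values = [distance for row in depth_map for distance in row]
    return max(values) - min(values)


def looks_three_dimensional(depth_map, min_relief=MIN_FACE_RELIEF_MM):
    """A flat object (such as a printed photo) shows almost no depth range."""
    return depth_range_mm(depth_map) >= min_relief


# A printed photo held up to the sensor: every sample is nearly equidistant.
printed_photo = [
    [400.0, 400.5, 400.2],
    [400.1, 400.3, 400.4],
    [400.2, 400.0, 400.1],
]

# A real face: the nose (center) sits noticeably closer than the cheeks.
real_face = [
    [430.0, 415.0, 431.0],
    [420.0, 395.0, 421.0],
    [428.0, 410.0, 429.0],
]

print(looks_three_dimensional(printed_photo))  # False
print(looks_three_dimensional(real_face))      # True
```

The point of the sketch is only that depth data carries information a photograph cannot supply; it is not a description of how any particular phone performs its checks.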

Myth #3: The stolen-identity danger

That brings us to the last myth, which is that someone could take the data points that map a consumer’s face and use them to recreate that face, thereby stealing the identity associated with it. Why, for example, couldn’t a bad actor steal that “map” of the user’s face and print a 3D mask of the user from it? Simple: Because the map is turned into a mathematical representation that cannot be reverse-engineered. When you present your face as authentication, the technology creates a mathematical value for it, and that value is compared against the stored one to determine if it’s the same face the device originally “learned.” So you have a situation where it’s not easy to access the data in the first place, and even if someone could, they couldn’t use it in that form to recreate a face. That’s just not how the technology works.
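The comparison step described above can be pictured with a minimal Python sketch. It assumes the "mathematical value" is a short list of numbers and that two values are compared using cosine similarity against a threshold. Both are assumptions made for illustration: the article does not say which representation or comparison a given device uses, and the numbers, names, and the 0.95 threshold here are hypothetical.

```python
import math

# Illustrative only: the vectors and threshold are made-up values.
MATCH_THRESHOLD = 0.95  # assumed similarity needed to accept a face


def cosine_similarity(a, b):
    """Measure how closely two equal-length vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def is_same_face(enrolled, candidate, threshold=MATCH_THRESHOLD):
    """Accept only when the new value is close enough to the stored one."""
    return cosine_similarity(enrolled, candidate) >= threshold


# The value the device "learned" at enrollment (hypothetical numbers).
enrolled = [0.12, -0.48, 0.33, 0.80]

# A later scan of the same person: close, but never exactly identical.
same_user = [0.11, -0.50, 0.35, 0.78]

# A scan of a different person.
other_user = [-0.60, 0.10, 0.70, -0.20]

print(is_same_face(enrolled, same_user))   # True
print(is_same_face(enrolled, other_user))  # False
```

Notice that nothing in the comparison ever handles an image: the device only asks whether two lists of numbers are close enough to each other.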

It’s understandable that consumers would have doubts and fears about facial recognition; after all, it hasn’t been around long enough to establish a solid track record as a trustworthy means of authentication. And early incarnations of the technology did have security issues, such as being fooled by a photo. Any new technology application has to grow and evolve, and the use of facial recognition as a form of biometric authentication certainly has. Acceptance takes time, along with reliable information and education about how the technology really works. Dispelling myths and misconceptions is an important step along the way.