Zeus. Gauss. Stuxnet. Flame. Blackhole. Java 0-day. IE 0-day.

We have been inundated lately with a series of technical vulnerabilities that affect the environments of organizations around the world. The technical threat is real, active, and seemingly unavoidable.

But let’s not forget that external attacks are not our only worry. Most security nuts have been saying for years that our biggest threat is not external. The “insider threat” is the one that we have to worry about, right? I will take that a step further, even if it means repeating myself. One of the biggest threats that we face on an ongoing basis is “the oops”. I have addressed this in a previous SecurityWeek column.

If I try to think back over the 10 biggest security breaches where I was personally working with the organization in question, here is how they break out:

1. Oops. A senior level user connected his personal computer to the corporate network and unknowingly infected the organization from his own computer that had been previously infected via an emailed Trojan Horse. When he shared files between the systems, his own email client mailed out copies of the Trojan Horse to “ALL”. The “oops” comes in the fact that it never occurred to him that he could infect work from his home computer. He just assumed corporate security would take care of everything.

2. Oops. User forgot to disable the login information for a departing user. The user logged back in 14 months later, and was able to use “known” standard internal passwords to log on to additional internal systems. The “oops” part is the fact that “user removal” was not on their departure checklist.

3. Oops. User forgot to disable the login information for a departing user. The departed user was selling astrology-related items through a website that he had hosted on the corporate web server. The “oops” was the fact that they completely omitted the “remove user” step from their internal checklist. Everything else was done and submitted, and the checklist was eventually signed off, with the “remove user” box still unchecked.

4. Internal Breach via an internal user who had modified a branch of the corporate website to also sell pirated satellite equipment. When he was discovered, he was able to delete several key corporate files before he was stopped.

5. External Breach due to vulnerable services and insecure architectures. Technically, the breach probably happened due to unpatched systems, so we might be able to move this to an “oops”, but the actual breach was due to a series of external technical attacks that compromised several external-facing systems, which in turn allowed connection to the internal network.

6. Oops. A user added a “proof of concept” network server to the outside of the firewall instead of on a DMZ or the inside. The unsecured system was also connected to the internal network, which let attackers bypass the corporate firewalls and reach the internal network. The “oops” was easy enough to identify: the server was even plugged in using the corporate standard “red means external” cable.

7. Internal Breach as a departing employee stole engineering information on new products, including product specification and materials lists, and went to work for a competitor.

8. Oops, as IT staff deliberately added a route around the external firewall, because they considered it too much trouble to maintain the actual firewall rules. For reference, the bypass route had been in place for five years before it was finally discovered and exploited by an attacker. So, while the actual breach was due to an external attack, I count the fact that IT staff actively supported the bypass for over five years as an “oops”.

9. Internal Breach via a team of internal users scheming together to commit financial fraud. Three users were involved in setting up fake payee accounts, creating fake invoices, approving payments, and performing wire transfers that they then used for their own purposes. As best as we could tell, this had been going on for several years before it was uncovered by accident because someone else saw an invoice that included a business address, when the person in fact knew that there was no such business at the vacant lot next to their house.

10. Oops. A team of internal users installed an “R” rated version of a “DOOM” type FPS (First Person Shooter) game on servers that were exposed to the Internet, then actively supported a gaming culture that included allowing unauthorized users onto the internal network without authentication – in order to play the game.

Okay, that may not be the “best” 10, but as I sit here and think about breaches that I have been involved with after the fact, these are a pretty solid set of 10. That includes six “oopses”, three internal breaches, and one external breach. Are my numbers perfect? Probably not since they come from a sample size of perhaps 30-35 total breaches, but I suspect they are reasonably representative. At least, I am going to assert that they are.

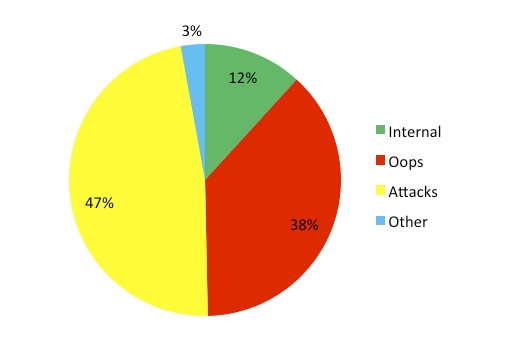

As I did in the earlier article, let’s take a quick look at statistics from PrivacyRights.org, which includes reported breaches as well as all breaches from the www.hhs.gov websites. As before, I went through all of the categories and simplified the list down to four categories of breaches:

1. Internal – Breach from an authorized insider

2. Oops – Lack of awareness, gap in technical skills, gap in security skills, lapse in judgment, accident, mistake, sheer stupidity, full moon

3. Attacks – Hacking attacks, malware, physical break-in and theft

4. Other – Breaches that could not be otherwise well categorized

Perhaps my recategorization is not perfect, but it is not meant to be. The message is close enough. If you add up the number of oopses and Internal breaches, you exceed the number of attacks. Even if my numbers are off by 15%, the numbers of breaches are close.

As of September 6, 2012, the database includes 3368 reported breaches. Of those breaches, 1276 of them, or about 38%, appear to be related to an “oops”. Please note that this does take some license with the numbers as reported. I did a relatively quick review of the breaches and lumped them into “oops” if I thought it was more appropriate than the category in which the breach was originally located. For instance, when the reported “Physical” breach reports that 16 boxes of medical records were uncovered in a dumpster, I am going to make an executive decision to call that an “oops” rather than a physical security breach. I will allege that the source of the breach is either the “oops” of throwing the PHI into the dumpster instead of disposing of it properly or, at the very least, the “oops” of hiring the idiot who actually threw the 16 boxes into the dumpster.

The current numbers worry me for two reasons:

1. In March of 2011, the privacyrights site showed 2374 reported breaches dating back to 2005. In September of 2012, the same site reports 3368 breaches. That is a 41% rise in reported breaches in the past 18 months (which is an even bigger shock if you consider that breach growth in previous years has been more like 15-20% annually). Some of this may be because we are better at identifying and reporting breaches. After all, breach reporting laws are more common now, and the ones that were in place in March of 2011 are probably seeing better compliance. But some of this may actually be because the number of breaches is rising. You have to admit, from the perspective of technical attacks, it has been a tough year. (41%!)

2. The ratio of oopses to attacks appears to be more or less the same as it was in 2005. In 2005 I counted out to “more than 30% of the reported breaches were due to users errors”. I would not be opposed to an argument that the difference between 30% and 38% over the past 18 months is not statistically significant.
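
For anyone who wants to check my arithmetic, here is a minimal sketch in Python that reproduces the percentages from the counts quoted above. The variable names are my own, and the annualized figure assumes steady compound growth across the 18 months, which is a simplification rather than anything the database itself reports.

```python
# Counts quoted in the column; the variable names are illustrative only.
breaches_mar_2011 = 2374   # reported breaches as of March 2011
breaches_sep_2012 = 3368   # reported breaches as of September 6, 2012
oops_sep_2012 = 1276       # breaches recategorized as an "oops"
months_elapsed = 18        # March 2011 to September 2012


def pct(part, whole):
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


# Share of all reported breaches that look like an "oops".
oops_share = pct(oops_sep_2012, breaches_sep_2012)

# Total rise in reported breaches over the 18-month window.
growth = pct(breaches_sep_2012 - breaches_mar_2011, breaches_mar_2011)

# Assumption: compound growth, so the 18-month ratio is scaled to 12 months.
ratio = breaches_sep_2012 / breaches_mar_2011
annualized = 100.0 * (ratio ** (12 / months_elapsed) - 1)

print(f"oops share of breaches: {oops_share:.1f}%")
print(f"growth over 18 months:  {growth:.1f}%")
print(f"annualized growth:      {annualized:.1f}%")
```

Run as written, the 18-month growth comes out just under 42% (the 41% above is rounded down), and under the compound-growth assumption the annualized rate is roughly 26%, which is still above the 15-20% of previous years.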

The overall message I get is that things are getting worse (attacks are up, and increasing at a higher rate) and that we are not getting better about dealing with the things that should be easy. It is important that all of our systems are patched to eliminate technical flaws, but we have to remember that it is just as important to make sure that our users are enabled with proper policies as well as security and technical training.

Related Reading: Security Awareness Training: It’s The Psychology, Stupid!