Researchers at the University of North Carolina at Chapel Hill have demonstrated a new method for bypassing modern face authentication systems.

Earlier this month, researchers Yi Xu, True Price, Jan-Michael Frahm, and Fabian Monrose presented their findings at the USENIX Security Symposium in Austin, Texas, and published the research in a paper entitled “Virtual U: Defeating Face Liveness Detection by Building Virtual Models from Your Public Photos.”

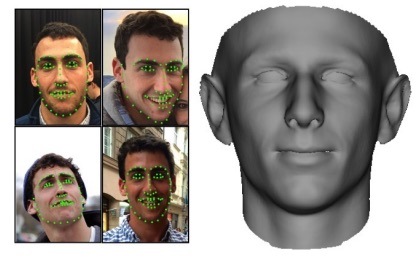

The novel approach to fooling face authentication systems relies on creating realistic, textured, 3D facial models based on pictures that the target user has shared on social media. The framework leverages virtual reality (VR) systems and includes the option to create animations for the facial model, such as raising an eyebrow or smiling.

These animations are effective at tricking detectors into believing that the 3D model is a real human face.

“The synthetic face of the user is displayed on the screen of the VR device, and as the device rotates and translates in the real world, the 3D face moves accordingly. To an observing face authentication system, the depth and motion cues of the display match what would be expected for a human face,” researchers explain.

Cybercriminals can leverage this method in VR-based spoofing attacks, which reveals a serious weakness in camera-based authentication systems. According to the researchers, these systems need to incorporate other sources of verifiable data as well; otherwise, they remain prone to attacks via virtual realism.

Face authentication systems have become increasingly popular over the past few years and are used in a variety of applications, ranging from authenticating financial transactions to securing mobile devices and desktop computers. Although they have seen significant improvements, face recognition technologies can still be spoofed, and they require robust security features beyond mere recognition to prevent this, the researchers argue.

To prevent spoofing attacks, these systems employ security measures that include texture-based approaches (which assume that spoofed faces have a different texture), motion-based approaches (where the motion of the user’s head is used to infer 3D shape), and liveness assessment techniques (which require the user to perform specific tasks during the authentication process).
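
The idea behind a motion-based check can be illustrated with a toy example. The sketch below is not taken from the paper or from any of the tested products; the landmark coordinates, the camera distance, and the `nose_offset` cue are all hypothetical values and names chosen for illustration. It rotates three face landmarks in front of a simple pinhole camera and measures how far the nose drifts from the midpoint between the eyes. On a flat photo the nose lies in the same plane as the eyes and barely drifts, while on a face with real depth it shifts noticeably.

```python
import math

def project(point, angle_deg, camera_distance=40.0):
    """Rotate a 3D point about the vertical axis, then apply a pinhole projection."""
    x, y, z = point
    a = math.radians(angle_deg)
    xr = x * math.cos(a) + z * math.sin(a)
    zr = -x * math.sin(a) + z * math.cos(a)
    depth = zr + camera_distance  # distance from the camera along its viewing axis
    return (xr / depth, y / depth)

def nose_offset(face, angle_deg):
    """Horizontal image offset of the nose from the midpoint between the eyes."""
    left_x, _ = project(face["left_eye"], angle_deg)
    right_x, _ = project(face["right_eye"], angle_deg)
    nose_x, _ = project(face["nose"], angle_deg)
    return nose_x - (left_x + right_x) / 2.0

# Hypothetical landmark positions (arbitrary units, negative z is toward the camera).
flat_photo = {"left_eye": (-3, 0, 0), "right_eye": (3, 0, 0), "nose": (0, -3, 0)}
real_face = {"left_eye": (-3, 0, 0), "right_eye": (3, 0, 0), "nose": (0, -3, -2)}

for name, face in (("flat photo", flat_photo), ("3D face", real_face)):
    print(f"{name}: nose offset after a 20 degree turn = {nose_offset(face, 20):+.4f}")
```

A VR display that re-renders the 3D model as the device moves reproduces this depth-dependent shift, which is why such a cue alone does not stop the attack described here.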

Motion-based systems and liveness detectors are used in combination, an approach that has already gained traction, the researchers say. However, they also explain that personal photos from online social networks not only compromise privacy but can also undermine face authentication systems: with the right approach, an attacker can use these photos to bypass face liveness detection.

The researchers created a 3D facial model that could also mimic movement, and tested their findings on commercial authentication systems with the help of 20 volunteers. By using real social media photos from these users, researchers were able to break five such systems “with a practical, end-to-end implementation” of their approach.

The systems that the researchers were able to bypass are KeyLemon, Mobius, True Key, BioID, and 1U App.

“All participants were registered with the 5 face authentication systems under indoor illumination. […] We thus captured one front-view photo for each user under the same indoor illumination and then created their 3D facial model with our proposed approach. We found that these 3D facial models were able to spoof each of the 5 candidate systems with a 100% success rate,” the researchers explain.

When the 3D facial models were created from photos taken from social media, the spoof success rate was lower, but still high enough to show that the approach works. 1U App fared best with a 0% spoof rate, but the other four systems were fooled: the spoof rate was 55% for BioID, 70% for True Key, 80% for Mobius, and 85% for KeyLemon.

However, the researchers say that the low spoof success rates for 1U App and BioID were directly related to the poor usability of these two systems. After testing the systems, the researchers discovered that “the indoor/outdoor login rates of BioID and the 1U App were 50%/14% and 96%/48%, respectively.” Apparently, these systems have difficulty authenticating legitimate users when the environment changes, which explains the high false rejection rates under outdoor illumination.
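
To make the usability point concrete, the quoted login rates can be turned into false rejection rates. The short sketch below assumes that the login rate is the share of genuine login attempts that succeed, so that the false rejection rate is simply one minus the login rate; that reading is an assumption made here, not a definition given in the article.

```python
# Genuine-user login rates (indoor, outdoor) as quoted by the researchers.
login_rates = {
    "BioID": (0.50, 0.14),
    "1U App": (0.96, 0.48),
}

def false_rejection_rate(login_rate):
    """Assumption: every failed login by a genuine user is a false rejection."""
    return 1.0 - login_rate

# Round to two decimals to avoid floating-point noise in the percentages.
frr = {
    name: tuple(round(false_rejection_rate(rate), 2) for rate in rates)
    for name, rates in login_rates.items()
}

for name, (indoor, outdoor) in frr.items():
    print(f"{name}: indoor FRR {indoor:.0%}, outdoor FRR {outdoor:.0%}")
```

Under that assumption, BioID rejects half of genuine users indoors and 86% outdoors, while 1U App rejects 4% indoors and 52% outdoors.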

“It appears to us that the designers of face authentication systems have assumed a rather weak adversarial model wherein attackers may have limited technical skills and be limited to inexpensive materials. This practice is risky, at best. Unfortunately, VR itself is quickly becoming commonplace, cheap, and easy-to-use. Moreover, VR visualizations are increasingly convincing, making it easier and easier to create realistic 3D environments that can be used to fool visual security systems,” the researchers say.
