IBM today announced the release of an open source software library designed to help developers and researchers protect artificial intelligence (AI) systems against adversarial attacks.

The software, named Adversarial Robustness Toolbox (ART), helps experts create and test novel defense techniques, and deploy them on real-world AI systems.

There have been significant developments in the field of artificial intelligence in recent years, to the point where some of the world’s tech leaders issued a warning about how technological advances could lead to the creation of lethal autonomous weapons.

Some of the biggest advances in AI are a result of deep neural networks (DNNs), sophisticated machine learning models inspired by the human brain and designed to recognize patterns in order to help classify and cluster data. DNNs can be used for tasks such as identifying objects in an image, translating text, converting speech to text, and even finding vulnerabilities in software.

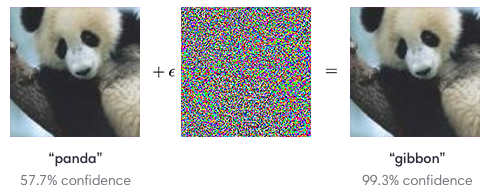

While DNNs can be highly useful, one problem with these models is that they are vulnerable to adversarial attacks. These attacks are launched by feeding the system a specially crafted input that causes it to make mistakes.

For example, an attacker can trick image recognition software into misclassifying an object in an image by adding subtle perturbations that are imperceptible to the human eye but are picked up by the AI. Other examples include tricking facial recognition systems with specially designed glasses, and confusing autonomous vehicles by sticking patches onto traffic signs.
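To make the idea of a subtle perturbation concrete, the following is a minimal sketch of one well-known attack of this kind, the fast gradient sign method, applied to a tiny logistic classifier. It is an illustration only and does not use ART's API; the model weights, the input, and the function names (`predict_proba`, `fgsm_perturb`) are hypothetical and chosen for the example.

```python
import math


def predict_proba(weights, bias, x):
    """Probability that input x belongs to class 1 under a logistic model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fgsm_perturb(weights, bias, x, label, epsilon):
    """Shift each feature by +/- epsilon in the direction that increases the loss."""
    p = predict_proba(weights, bias, x)
    # For logistic regression with cross-entropy loss, the gradient of the
    # loss with respect to the input is (p - label) * weights.
    grad = [(p - label) * w for w in weights]
    signs = [(g > 0) - (g < 0) for g in grad]
    return [xi + epsilon * s for xi, s in zip(x, signs)]


# Hypothetical toy model and input (assumptions for illustration only).
weights = [2.0, -1.5, 0.5, 1.0]
bias = -0.2
x = [0.3, 0.1, 0.4, 0.2]
label = 1  # the true class of x

clean_p = predict_proba(weights, bias, x)
x_adv = fgsm_perturb(weights, bias, x, label, epsilon=0.2)
adv_p = predict_proba(weights, bias, x_adv)

print("clean prediction:", int(clean_p >= 0.5))
print("adversarial prediction:", int(adv_p >= 0.5))
```

In this toy setup no feature moves by more than 0.2, yet the predicted class flips from 1 to 0. Attacks on real image classifiers follow the same principle, with the gradient computed through the full network.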

IBM’s Python-based Adversarial Robustness Toolbox aims to help protect AI systems against these types of threats, which can pose a serious problem to security-critical applications.

According to IBM, the platform-agnostic library provides state-of-the-art algorithms for creating adversarial examples and methods for defending DNNs against them. The software can measure the robustness of a DNN, harden it by augmenting the training data with adversarial examples or by modifying its architecture to prevent malicious signals from propagating through its internal representation layers, and perform runtime detection to identify potentially malicious input.
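Two of these capabilities, measuring robustness and augmenting the training data with adversarial examples, can be sketched in a few lines. The example below is a rough illustration under stated assumptions and is not ART's API: it uses a hypothetical two-feature logistic model, a hand-made dataset, and illustrative helper names (`fgsm`, `adversarial_accuracy`, `augment_with_adversarial`).

```python
import math


def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fgsm(weights, bias, x, label, epsilon):
    """Fast gradient sign perturbation of x for a logistic model."""
    p = predict_proba(weights, bias, x)
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]


def accuracy(weights, bias, data):
    """Fraction of (x, y) pairs the model classifies correctly."""
    hits = sum((predict_proba(weights, bias, x) >= 0.5) == bool(y) for x, y in data)
    return hits / len(data)


def adversarial_accuracy(weights, bias, data, epsilon):
    """Robustness measure: accuracy after every input has been attacked."""
    attacked = [(fgsm(weights, bias, x, y, epsilon), y) for x, y in data]
    return accuracy(weights, bias, attacked)


def augment_with_adversarial(weights, bias, data, epsilon):
    """Return the original data plus one adversarial copy of each sample."""
    return data + [(fgsm(weights, bias, x, y, epsilon), y) for x, y in data]


# Hypothetical model and dataset (assumptions for illustration only).
weights, bias = [1.0, -1.0], 0.0
data = [([0.9, 0.1], 1), ([0.6, 0.5], 1), ([0.2, 0.8], 0), ([0.45, 0.55], 0)]

clean_acc = accuracy(weights, bias, data)
robust_acc = adversarial_accuracy(weights, bias, data, epsilon=0.1)
augmented = augment_with_adversarial(weights, bias, data, epsilon=0.1)

print("clean accuracy:", clean_acc)
print("accuracy under attack:", robust_acc)
print("augmented training set size:", len(augmented))
```

The gap between clean accuracy and accuracy under attack gives a simple robustness score. Retraining the model on the augmented set, which is not shown here, is the hardening step, and rerunning the same measurement afterwards indicates whether the defense helped.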

“With the Adversarial Robustness Toolbox, multiple attacks can be launched against an AI system, and security teams can select the most effective defenses as building blocks for maximum robustness. With each proposed change to the defense of the system, the ART will provide benchmarks for the increase or decrease in efficiency,” explained IBM’s Sridhar Muppidi.

IBM also announced this week that it has added intelligence capabilities to its incident response and threat management products.
