How Combining Two Completely Separate Open Source Projects Can Make Us All More Secure

When you run an application, how can you verify that what you are running was actually built from the code that a trusted developer wrote?

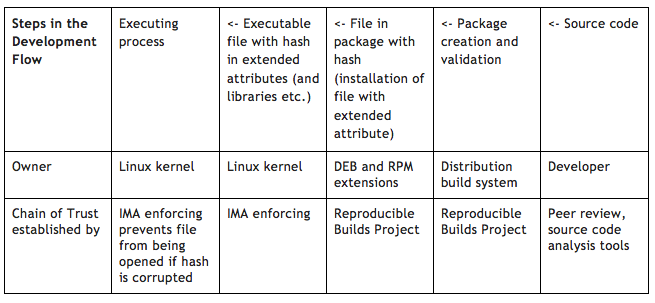

Imagine that an open source developer writes the code for the software and publishes it on GitHub or another website. You, the end user, can download the software right from that location, compile it, and run it. You believe that what you’re running is what the developer wrote for one of two reasons. Either you cloned the repo via a secured channel, or you verified the source package that you downloaded from the project website by comparing its hash against the value that the developer published on their website and signed with a PGP key. (Security folk may not want to believe it, but it is often claimed, hyperbolically, that nobody actually does this.)
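
To make the hash comparison concrete, here is a minimal sketch in Python. The function names are hypothetical, SHA-256 is an assumption (projects publish various digest types), and checking the PGP signature on the published value is a separate step that this sketch does not cover.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=65536):
    """Return the hex SHA-256 digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in fixed-size chunks so large source archives fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, published_hex):
    """Compare the local digest with the value the developer published."""
    # Normalize whitespace and case, since published hashes are often
    # copied out of a text file or a web page.
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)
```

A match only tells you that the file is the one the published hash describes; the trust still rests on the hash itself having come from the developer.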

When you get the application from a distribution rather than from the developer, you are trusting that the distribution performed the same validation that you would have done (or better). Now you, as the end-user, get the installable package from the distribution. You install it on your system and you run the executable contained within the package. You don’t have to compile it because that is one of the value-added functions of the distributor. The package management system handles the verification of the package before it is installed.

Now, as the end-user, how do you know that the executable that you are running is what the open source developer originally wrote? Many distributions alter the code before they ship it to apply bug patches and security fixes. How does the distributor know that the code in the package was compiled from the developer’s code plus their fixes? In most cases, distributions have automated build systems that take the code and emit packages. But what if one of the machines in the build system is corrupted? How do the developer, the distributor, and you figure this out?

The Reproducible Builds Project intends to solve that problem. The goal of the Reproducible Builds Project is to ensure that if a package is built on one system and then again on a different but similar system, the outputs are directly comparable. This can be verified using the diffoscope tool produced by the project or, in many cases, by comparing cryptographic hashes of the two packages to ensure that they are identical.
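
As a rough illustration of the hash-comparison approach, the sketch below compares two unpacked build outputs file by file and lists the paths that differ. It is not how diffoscope works internally (diffoscope explains why files differ, not just which ones), and the function names are hypothetical.

```python
import hashlib
from pathlib import Path


def tree_digests(root):
    """Map each file's path (relative to `root`) to its SHA-256 hex digest."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def differing_files(build_a, build_b):
    """Return sorted relative paths that are missing from one build or differ in content."""
    a, b = tree_digests(build_a), tree_digests(build_b)
    return sorted(name for name in a.keys() | b.keys() if a.get(name) != b.get(name))
```

An empty list means the two trees are byte-for-byte identical, which is the outcome a reproducible build is aiming for.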

It is harder to achieve reproducibility than it is to verify it, because many packages are not reproducibly buildable for a variety of reasons. These reasons include timestamps embedded somewhere in the package, alternate build directories, differences in the versions of the build tools, variable directory inclusion, and other small details of the build system that end up in the final package.
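
The timestamp problem is easy to see with an archive format. The sketch below, under stated assumptions, packs files into a tar archive while pinning the details that usually vary between machines: member order, modification time, ownership, and permissions. It reads the timestamp from the `SOURCE_DATE_EPOCH` environment variable, a convention defined by the Reproducible Builds project; the function name and the fallback value of 0 are illustrative choices, not part of any packaging tool.

```python
import io
import os
import tarfile


def deterministic_tar(files, default_epoch=0):
    """Pack {name: bytes} into an uncompressed tar whose bytes depend only on the input."""
    # Use the externally supplied build timestamp if present, otherwise a fixed fallback.
    epoch = int(os.environ.get("SOURCE_DATE_EPOCH", default_epoch))
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w", format=tarfile.USTAR_FORMAT) as archive:
        # Sort names so the member order does not depend on dict or filesystem order.
        for name in sorted(files):
            info = tarfile.TarInfo(name=name)
            info.size = len(files[name])
            info.mtime = epoch            # no wall-clock time leaks into the archive
            info.mode = 0o644             # fixed permissions
            info.uid = info.gid = 0       # no build-user identity
            info.uname = info.gname = ""
            archive.addfile(info, io.BytesIO(files[name]))
    return buffer.getvalue()
```

Two calls with the same file contents return identical bytes regardless of when or in what order the files were supplied. Compression is left out on purpose, because some compressed formats (gzip, for example) carry a timestamp of their own in the header.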

The Reproducible Builds project is writing tools to facilitate comparison of two separately generated packages, fixing toolchain issues, and working with the upstream developers to fix any problems which cause the source not to be reproducibly buildable. Subtle corruptions of individual machines in a build system become detectable when previously reproducibly buildable packages suddenly start failing verification.

In summary, the Reproducible Builds project is working to ensure the package will be identical to packages built on another similar system using the same source code so that the integrity of the package can be validated. Packages that can be reproducibly built include deb and RPM packages, which carry within them the cryptographic hashes of the files that are included in the packages. This includes the executable as well.

The Integrity Measurement Architecture (IMA-appraisal) component of the Linux kernel has the capability of validating a file’s integrity based on the file’s signature stored as an extended attribute, before allowing the file to be accessed (for example, before a file can be executed or a library loaded). For the signature validation to succeed, the file signature’s public key must be on the IMA keyring. Only trusted keys, those keys signed by a key on the system keyring, may be added to the IMA keyring.
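
For readers who want to see where that signature lives, the sketch below reads the raw `security.ima` extended attribute from a file on Linux. It only inspects the attribute; the appraisal itself is done by the kernel, and this code makes no attempt to parse or verify the value. The helper name is hypothetical, and the function returns `None` on platforms or filesystems without extended attribute support.

```python
import os

# Name of the extended attribute that IMA-appraisal consults.
IMA_XATTR = "security.ima"


def read_ima_xattr(path):
    """Return the raw security.ima value for `path`, or None if it is absent or unreadable."""
    if not hasattr(os, "getxattr"):
        # os.getxattr is only available on Linux.
        return None
    try:
        return os.getxattr(path, IMA_XATTR)
    except OSError:
        # No such attribute, no xattr support on this filesystem, or no permission.
        return None
```

On a system that has not been labeled for IMA, this will typically return `None` for every file.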

Soon it will be possible to enroll the signed hashes from the package management system as IMA attributes during the installation process. Then, if you configure your system to be IMA enforcing, you will know that every running application came from your trusted distribution.

If your trusted distribution uses reproducible builds, then you will be able to directly trace the chain of integrity of the executing process back to the original code and know that the code has not been subverted during delivery. Of course, you still have to protect your system keyring, IMA keyring and policy, trust the compiler, and trust (or validate) that the developer is producing code without backdoors. What you gain is verifiable evidence of correspondence between the executing process and the source code, which is a new level of integrity.

This model of integrity is not completely realized today. Some steps are not yet complete: IMA is still considered experimental; patches to the Debian packaging system to include signed file hashes have been submitted but not yet accepted; and the Reproducible Builds Project has made amazing strides, but its work is not yet finished.

This vision for a full chain of integrity from developer to executing process is taking shape and is tantalizingly close to realization. I look forward to the day when we can run our applications with the full knowledge that they come to us intact and as intended by the developer or the distribution.