In the current threat landscape, where cyber-criminals increasingly automate their attacks, an automatic security reaction seems inevitable: mitigating attacks with a manual process would be about as effective as bringing a knife to a gunfight. However, a carelessly designed automatic security reaction can be dangerous too.

In popular culture, the most famous example of an automatic security reaction gone awry is the “Doomsday device” in Stanley Kubrick’s film Dr. Strangelove. In the film, the Soviets build a Doomsday device consisting of 50 buried bombs with “Cobalt Thorium G”, set to detonate should any nuclear attack strike their country, in order to deter the West from launching a successful nuclear first strike. However, the automatic nature of the Doomsday device does not account for any nuclear attack other than a preemptive American strike, thus allowing a single mad officer to set off a chain reaction that brings the world to annihilation.

Dangerous automatic security reactions that turn into a liability are not limited to fiction. In fact, the recent Snowden affair, in which some of the NSA’s secrets were made public, provides at least two examples of such liabilities, one for each party engaged in this battle.

Snowden’s Dead Man’s Switch

According to the Guardian’s reporter, Glenn Greenwald, Snowden “has taken extreme precautions to make sure many different people around the world have these archives to insure the stories will inevitably be published.” Greenwald added: “If anything happens at all to Edward Snowden, he told me he has arranged for them to get access to the full archives.”

This “dead man’s switch” is supposed to deter the US from harming Snowden, but, as Bruce Schneier observed, it may actually create new threats to Snowden’s security. In Schneier’s words: “[if I were Snowden then] I would be more worried that someone would kill me in order to get the documents released than I would be that someone would kill me to prevent the documents from being released. Any real-world situation involves multiple adversaries, and it’s important to keep all of them in mind when designing a security system.”

The DHS Data Leakage Prevention

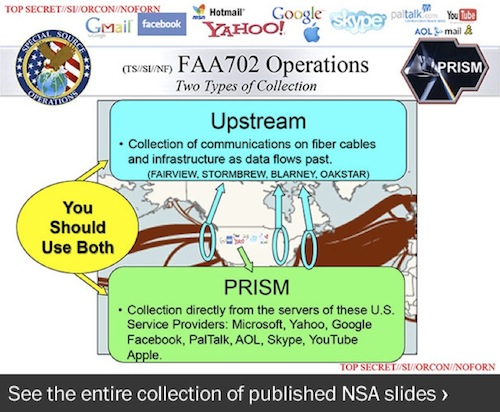

When the Washington Post published one of the NSA slides leaked by Snowden, the Department of Homeland Security (DHS) warned its employees that viewing the document from an “unclassified government workstation” could lead to administrative or legal action. “You may be violating your non-disclosure agreement in which you sign that you will protect classified national security information,” the DHS communication said.

NSA’s leaked slide – If you’re a DHS employee then your computer is classified as “top secret”

While at first glance the DHS’s reaction might look like “DHS Puts its Head in the Sand”, a closer look lets us make an educated guess about the reason behind it.

Judging from the memo’s language, the DHS has (at least) two networks: one for classified information, which is probably not connected to the Internet, and an unclassified one that has Internet connectivity. These networks are likely isolated from each other by an “air gap”. Presumably, to make sure that classified information does not leak by mistake from the classified network to the unclassified one, top secret documents are digitally watermarked, and a pre-installed software agent on each unclassified workstation monitors all of the station’s documents for the watermark. When the agent discovers such a violation, it sends an alert to the DHS’s Security Operations Center (SOC). The SOC then has to mitigate the problem by first disconnecting that station and later recovering it, if possible, so that it becomes “unclassified” again.
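The guessed mechanism can be pictured with a minimal Python sketch. Everything in it is an assumption made for illustration: the `WATERMARK` byte string, the `scan_workstation` and `handle_alert` names, and the idea that a watermark is a plain substring are all hypothetical, and none of it describes an actual DHS system.

```python
from dataclasses import dataclass
from typing import Dict, List, Set

# Hypothetical marker; a real digital watermark would not be a plain substring.
WATERMARK = b"TOP-SECRET-WATERMARK"


@dataclass
class Alert:
    workstation: str
    document: str


def scan_workstation(workstation: str, documents: Dict[str, bytes]) -> List[Alert]:
    """Agent side: return one alert per document that carries the watermark."""
    return [
        Alert(workstation, name)
        for name, content in documents.items()
        if WATERMARK in content
    ]


def handle_alert(alert: Alert, quarantined: Set[str]) -> None:
    """SOC side: the first reaction described in the text is to disconnect the station."""
    quarantined.add(alert.workstation)


# Example: one clean file and one watermarked file on the same station.
docs = {"memo.txt": b"lunch menu", "slide.png": b"image bytes " + WATERMARK}
quarantined: Set[str] = set()
for alert in scan_workstation("ws-17", docs):
    handle_alert(alert, quarantined)
```

Note that the agent in this sketch reacts to the watermark alone; it has no notion of how the document reached the workstation.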

If this guess is correct, then on the morning the Washington Post published the NSA’s leaked top secret slide, many DHS employees read the article on their unclassified stations. The agent installed on each station detected the watermarked top secret document and sent an alert to the SOC. The SOC was swamped with alerts and was unable to monitor real security incidents. The DHS was therefore forced to tell its employees to refrain from viewing that specific page.

The root cause of this failure is that the DHS’s data leakage prevention policy considered only one way for a top secret document to arrive at an unclassified station: leakage from the government’s classified network. In this case, however, the source of the “top secret” document was actually the Internet, which broke one of the system’s basic assumptions and made it fail completely.
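To make the broken assumption concrete, here is a small, purely illustrative Python sketch. The `origin` and `doc_hash` fields, the function names, and the figure of 500 workstations are all hypothetical; the sketch only contrasts a policy that treats every alert as an internal leak with one that also records where the document came from.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Alert:
    workstation: str
    doc_hash: str  # fingerprint of the flagged document (hypothetical field)
    origin: str    # "internal" or "internet" (hypothetical field)


def naive_incidents(alerts: List[Alert]) -> int:
    """Assumed policy: every alert is a separate leak from the classified network."""
    return len(alerts)


def origin_aware_incidents(alerts: List[Alert]) -> Counter:
    """Group alerts by (document, origin) so one public document is one incident."""
    return Counter((a.doc_hash, a.origin) for a in alerts)


# 500 employees open the same published slide; one station has a genuine leak.
alerts = [Alert(f"ws-{i}", "slide-abc", "internet") for i in range(500)]
alerts.append(Alert("ws-900", "report-xyz", "internal"))

print(naive_incidents(alerts))              # 501 separate incidents to triage
print(len(origin_aware_incidents(alerts)))  # 2 distinct incidents
```

Under the naive policy the single genuine leak is buried among 500 alerts caused by one public web page, which is the swamping effect described above.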

Assumptions are dangerous; making automatic decisions based on them can be even worse

Summing up, in complex real-world situations that involve multiple adversaries, such as the current Internet security arena, it’s important to keep all of them in mind when designing a security system. Since this is a very hard task, we need to be ready for the case in which one of our assumptions fails. Therefore, we should be very careful when deploying a new automatic security mechanism, as automatic mechanisms are especially vulnerable when one of their underlying assumptions breaks.