This episode is a bit of a grab-bag of important points that didn’t seem to fit anywhere else. Worse, some of it is going to be my opinion! I’ll try to carefully delineate what is my opinion from what are facts, so we know what to argue about.

One important thing to remember about your security metrics program is that it’s a long-term undertaking. If you want to measure something, the only way to isolate local fluctuations is to have long-term data. It really doesn’t make sense to talk about “spikes” in data that you’ve only been keeping for a few months. In other words, to expose long-term trends, you need long-term data. So the best thing you can do is hop in your time machine, go back a couple years and slap your then-self upside the head and tell your former self to start a metrics program right after you mortgage the house and buy stock in Apple.

When you start keeping a long-term data series, remember that you can’t change your algorithm for interpreting it on a regular basis. If you keep tweaking how you process the data, you’re not actually keeping a long-term data set at all; you’re keeping a series of snapshots with different settings, unless you retain the underlying data and reprocess it using your new analytics each time. If you do that, it’s a good idea to keep using your old analytics in parallel because you’ll be better able to detect changes over time in the old analysis than in the new — you may be subconsciously using the new analysis to skew your own perceptions. On the other hand, you could also be omitting something important from your old analysis as a result of something new coming along.
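Here is a minimal sketch of what "retain the underlying data and run the old and new analytics in parallel" could look like. Everything in it is an assumption for illustration: the event records are fabricated, and `analysis_v1`, `analysis_v2`, and `side_by_side` are hypothetical names, not part of any real tool.

```python
from collections import Counter

# Fabricated raw records: (month, event_type). Keep these forever;
# the analyses below can change, the raw data should not.
RAW_EVENTS = [
    ("2010-01", "malware"), ("2010-01", "phishing"),
    ("2010-02", "malware"), ("2010-02", "malware"), ("2010-02", "policy"),
    ("2010-03", "phishing"), ("2010-03", "policy"), ("2010-03", "policy"),
]

def analysis_v1(events):
    """Old definition (hypothetical): only malware counts as an incident."""
    return Counter(month for month, kind in events if kind == "malware")

def analysis_v2(events):
    """New definition (hypothetical): malware and phishing both count."""
    return Counter(
        month for month, kind in events if kind in {"malware", "phishing"}
    )

def side_by_side(events):
    """Run both analyses over the same raw data, keyed by month."""
    old, new = analysis_v1(events), analysis_v2(events)
    months = sorted({month for month, _ in events})
    # Counter returns 0 for months in which an analysis counted nothing.
    return {m: {"v1": old[m], "v2": new[m]} for m in months}

for month, counts in side_by_side(RAW_EVENTS).items():
    print(month, counts)
```

Because both series come from the same raw records, any gap between them is caused by the change in definition and not by a change in what actually happened.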

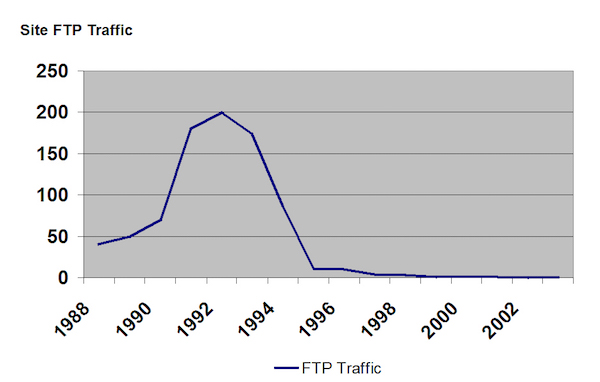

For example, if I had a chart that looked like this (Warning: fabricated numbers!):

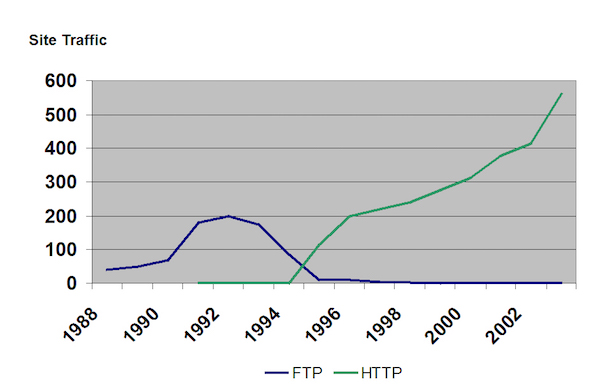

That’s the mythical FTP service on some site, and we might conclude there’s something really wrong with it, because it drops off rather catastrophically. But obviously, that’s because my old data set didn’t include a new type of data that came along around 1994:

This picture actually tells a more interesting story than if I just charted HTTP or FTP numbers alone: it also shows a change in our underlying technologies. If I wanted to appeal to my viewer’s ego (or perhaps this presentation was to show our Director of Marketing something about “outreach”), I might throw in a dotted line indicating total accesses.
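The "total accesses" line is just the per-year sum of the individual series, with a series counted as zero for years before it existed. A rough sketch, with fabricated numbers (same warning as above) and a hypothetical helper named `combine`:

```python
# Fabricated yearly access counts, invented purely for this example.
FTP = {1991: 120, 1992: 150, 1993: 160, 1994: 90, 1995: 40}
HTTP = {1994: 60, 1995: 210}  # no HTTP data exists before 1994

def combine(*series):
    """Return {year: total} across all series; missing years count as 0."""
    years = sorted(set().union(*series))
    return {year: sum(s.get(year, 0) for s in series) for year in years}

total = combine(FTP, HTTP)
for year, count in total.items():
    # Columns: year, FTP, HTTP, total
    print(year, FTP.get(year, 0), HTTP.get(year, 0), count)
```

Treating a missing year as zero is itself an assumption: it is right when the service did not exist yet, and wrong when you simply were not collecting the data.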

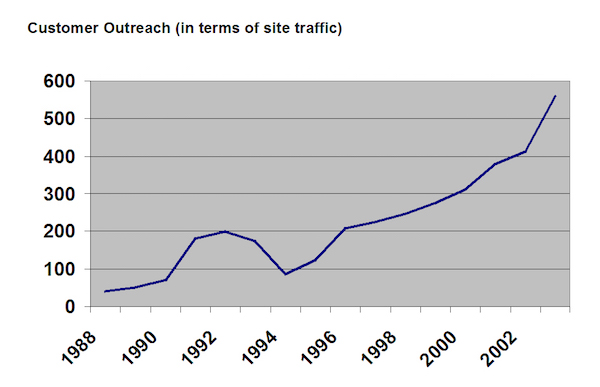

That brings up another topic: when you’re telling a story, the plot should build along a story arc just like a good movie or a well-written novel. I might tell that story by showing a chart like this:

I could explain that this chart is a merger of our site exposure through FTP and later via the Web: “See that downturn around 1994? That’s where we didn’t jump on the Web fast enough and fell off the landscape for a little while.”

I could show the previous slide with the inflection points at the technological switchover and explain that this is why we need to start using Twitter (or whatever the latest and greatest technology is this week). The best thing is that this is a pretty honest analysis! I often find myself sitting in meetings where someone says, “We should do XYZ because… uh… STUFF!” This is a set of numbers that tells a story that is accurate, specific and compelling, if not interesting.

Think about the order in which you want to build your data. Perhaps you might start out with some charts that show vulnerability rates across your enterprise (presumably, holding steady or trending downward) and then pop up a break-down by business unit. Let’s say you want to do a brutal hatchet job on one business unit that does their own system administration and that has a higher vulnerability rate. You can present a chart that says, “This is what our vulnerability rate looks like,” and then another that shows, “This is what our vulnerability rate looks like with such-and-such business unit factored out.” Sometimes it’s best to present a summary and then explode it out into the individual data sets; other times it’s better to start with individual data items and roll them up. Think of the story you’re trying to tell and decide which way is better.
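To make the "factored out" chart concrete, here is a small sketch that computes an enterprise-wide vulnerability rate and then the same rate with one business unit excluded. The unit names and host counts are fabricated, and `vulnerability_rate` is a hypothetical helper, not a standard formula.

```python
# Fabricated figures: business unit -> (vulnerable hosts, total hosts).
UNITS = {
    "finance": (12, 400),
    "engineering": (30, 600),
    "research": (45, 200),  # hypothetical unit that runs its own sysadmin
}

def vulnerability_rate(units, exclude=()):
    """Vulnerable hosts divided by total hosts, skipping units in `exclude`."""
    kept = [counts for name, counts in units.items() if name not in exclude]
    vulnerable = sum(v for v, _ in kept)
    hosts = sum(h for _, h in kept)
    return vulnerable / hosts if hosts else 0.0

overall = vulnerability_rate(UNITS)
without_research = vulnerability_rate(UNITS, exclude={"research"})
print(f"overall: {overall:.1%}")
print(f"without research: {without_research:.1%}")
```

This version weights by host count and does not average the per-unit rates, so a small unit with a terrible rate cannot dominate the summary. Which of the two you want depends on the story you are telling, so say which one you used.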

One other important factor in building your metrics story is to keep it credible. That’s why I don’t recommend tying metrics to financial models unless they are very cut-and-dried.

Let’s say you work for Acme Widgets and stand up and say, “I can save us five million dollars!” That’s a great way of trolling a meeting for attention, but you’re less likely to get tripped up if you say, “I think I have identified a place where we’re losing production.” Then let everyone do their own cost estimates. Whenever I’m trying to present a metric that shows a business impact, I usually try to map it to the business impact it shows and leave it there. For example, head count is a good unit of measurement in a lot of security metrics — don’t try to extrapolate how much an FTE costs — any manager will do that automatically without any prompting from you.

That’s a good example of building your metric on established facts. At certain levels of an organization you can expect someone to be able to extrapolate an FTE (which depends on location, business type, etc.) or floor space or rack space, etc. Each business unit has its own units of measurement and that’s how you connect your metric to what they are doing. Frequently when security people say, “We need to learn to talk in the language of business,” that’s the underlying issue: you need to tie your metrics to the unit of measurement that is most relevant to your audience. In fact, if you can’t do that, you should consider the scary possibility that the information is not actually relevant to them at all.

Lessons:

• Think about the order in which you want to build your data

• Long-term is better than short-term; go back in time

• Buying Apple stock four years ago would have been a good move

• Build the foundation of your metric on known facts

Next up: More tales of white-knuckled security metrics